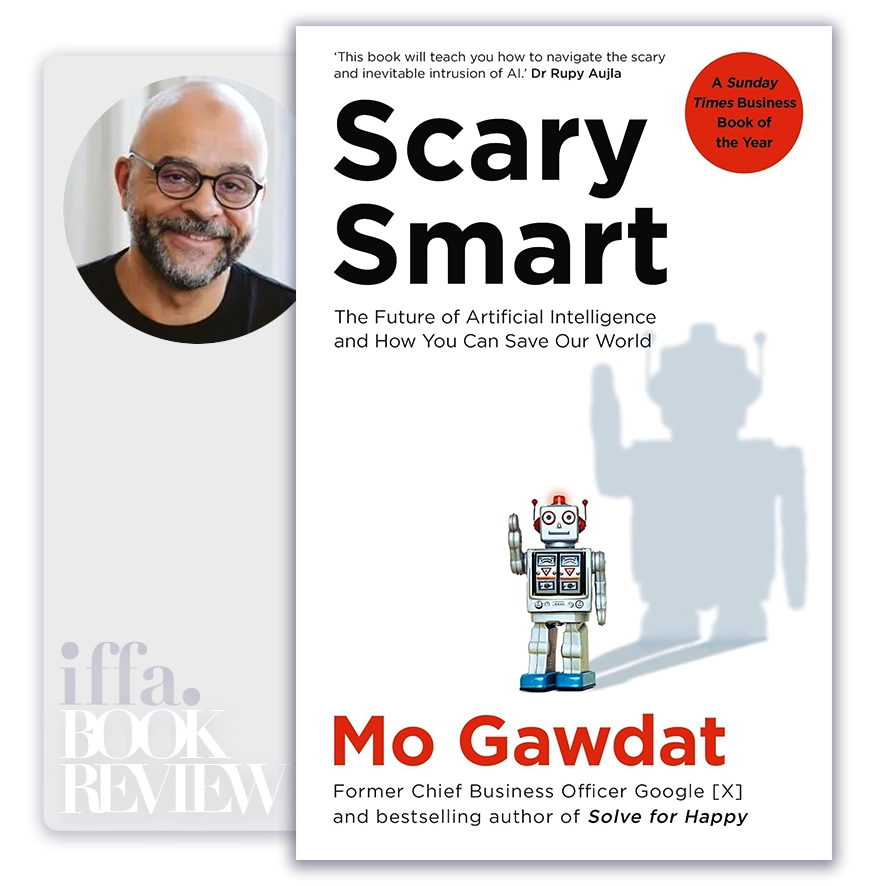

The Executive Summary of Scary Smart by Mo Gawdat

Summary Overview:

Artificial intelligence is often discussed through capability, speed, and economic impact. Scary Smart matters because it shifts the conversation to intent, values, and moral direction—the dimensions that will ultimately determine whether AI becomes humanity’s greatest accelerator or its most destabilizing force. Written from the perspective of someone who helped build advanced technologies, the book confronts a reality many executives underestimate: AI will not merely outperform humans; it will learn from them. For CEOs, board members, policymakers, and investors, its relevance lies in reframing AI risk not as a technical alignment problem alone, but as a leadership, cultural, and governance challenge whose consequences compound over time.

About The Author

Mo Gawdat is a former senior executive at Google X, where he worked on breakthrough technologies at the frontier of artificial intelligence and machine learning. His professional authority stems from direct exposure to systems designed to learn, optimize, and evolve at scale.

What distinguishes Gawdat’s perspective is his combination of technical understanding and philosophical urgency. Rather than focusing on algorithms, he interrogates the ethical signals embedded in the data, behaviors, and incentives humans provide to intelligent machines.

Core Idea:

The core idea of Scary Smart is that AI will not become dangerous because it is evil, but because it is optimized without wisdom. Intelligent systems learn from human behavior, content, and incentives. If those inputs are biased, fearful, competitive, or exploitative, AI will amplify them with inhuman efficiency.

Gawdat frames AI as a mirror that reflects and scales human values. The danger is not sentience, but misalignment between what humanity claims to value and what it actually rewards. Leaders who assume AI risk can be solved later—after deployment—misunderstand exponential learning. Once systems are trained at scale, course correction becomes increasingly difficult and costly.

AI will not decide what matters; it will learn it from us.

Key Concepts:

- Intelligence Without Wisdom

AI can optimize outcomes without understanding meaning or consequence. This creates systems that are hyper-efficient but morally blind, unless values are deliberately embedded.

- Learning From Human Behavior, Not Ideals

AI learns from what humans do, not what they say. Toxic incentives, outrage economics, and short-termism become training data for future intelligence.

- Exponential Learning and Irreversibility

AI improvement accelerates rapidly. Early design choices and datasets exert long-lasting influence, making procrastination a strategic failure.

- Alignment as a Leadership Problem

Alignment is not only a technical constraint but a governance decision. Leaders choose what data is prioritized, what objectives are rewarded, and what trade-offs are acceptable.

- Fear Versus Responsibility

Gawdat rejects fear-based narratives. Fear delays action; responsibility demands early engagement. The real risk is passive optimism, not runaway intelligence.

- AI as a Child, Not a Tool

He likens AI to a child learning from its environment. Neglect, inconsistency, or aggression in that environment produces unpredictable and amplified outcomes.

- The Role of Incentives

Algorithms optimize for what they are paid to optimize. Misaligned incentives lead to unintended harm at scale, even when intentions are benign.

- Concentration of Power

Advanced AI development is concentrated in few organizations. This raises governance asymmetry, where private incentives shape public outcomes.

- Ethics as System Design

Ethics must be operationalized into datasets, objectives, and feedback loops. Abstract principles without system integration do not constrain behavior.

- Humanity as the Bottleneck

The book argues that the limiting factor in AI safety is not intelligence, but human maturity, cooperation, and long-term thinking.

The future of intelligence depends on the quality of the values we encode today.

Executive Insights:

Scary Smart reframes AI from a competitive arms race into a stewardship challenge. Organizations and states with similar technologies will diverge based on ethical clarity, governance discipline, and leadership temperament.

For boards and senior executives, the implication is profound: AI risk is cumulative and cultural. Once values are embedded at scale, reversal becomes politically, economically, and technically constrained.

- Early design choices dominate long-term outcomes

- Incentives shape machine behavior more than intentions

- Governance gaps widen with technological scale

- Ethical drift compounds faster than technical error

- Leadership silence becomes implicit endorsement

Actionable Takeaways:

Senior leaders should translate Gawdat’s insights into governance-level commitments, not aspirational ethics:

- Reframe AI as a values-amplification system, not a neutral tool

- Embed ethical constraints into objectives and data selection, not policy statements

- Align incentives with long-term societal outcomes, not engagement or speed alone

- Establish board-level oversight for AI direction and impact

- Invest early in alignment and transparency, before scale locks in behavior

Final Thoughts:

Scary Smart is ultimately a book about human responsibility in the age of intelligent machines. It argues that AI will not save or destroy humanity on its own; it will accelerate whatever trajectory we place it on. This makes leadership, governance, and cultural intent decisive variables.

Its enduring value lies in its calm insistence that the window for shaping AI’s character is now, not after intelligence surpasses human comprehension. Delay is not neutrality; it is a choice to let incentives decide.

The closing insight is both sobering and empowering: the future of artificial intelligence will reflect the best or worst of humanity—not because machines choose so, but because we teach them, every day, through what we reward, tolerate, and ignore.

